The Architecture of Post-Screen Software

The GUI is a legacy paradigm. The future of software is a conversation with autonomous agents that get things done.

For decades, the graphical user interface (GUI) has been the primary medium through which humans interact with computers. We click, we drag, we type in boxes. In the world of data, this paradigm culminated in the business intelligence (BI) dashboard, a tool that promised to democratize data by making it visual. For a time, it worked. But the dashboard, once a revolutionary window into business operations, has hit a glass ceiling. It represents a static, rear-view mirror on the world, and its limitations are becoming critical failures in an economy that demands real-time, autonomous action.

This isn’t just a matter of suboptimal tooling; it’s a systemic drain on our most valuable resource: human talent. The very tools meant to empower knowledge workers are often the source of their biggest productivity sinks. Studies consistently show a staggering amount of time wasted on what is often called “work about work.” Knowledge workers can spend up to 60% of their time on non-value-added tasks like searching for information, managing emails, and switching between applications. For data scientists, the problem is even more acute. The infamous “80/20 rule” of data science suggests that as much as 80% of their time is spent not on analysis or modeling, but on the janitorial work of finding, cleaning, and preparing data.

This is the “interface tax” in action: the immense, often hidden cost of forcing skilled professionals to act as manual APIs, shuttling information between systems that don’t speak to each other. Even when the data is pristine and the dashboard is beautifully rendered, it can still fail at its ultimate purpose: driving decisions. A 2025 Gartner study cited by CIO.com found that a staggering 58% of business decision-makers still rely on “gut feel” or experience rather than data-driven insights. The dashboard, in many cases, has become what some analysts call “metric theater”: a proliferation of charts that provide the illusion of insight without a clear, direct path to action.

The Agent-Oriented Architecture: A New Foundation

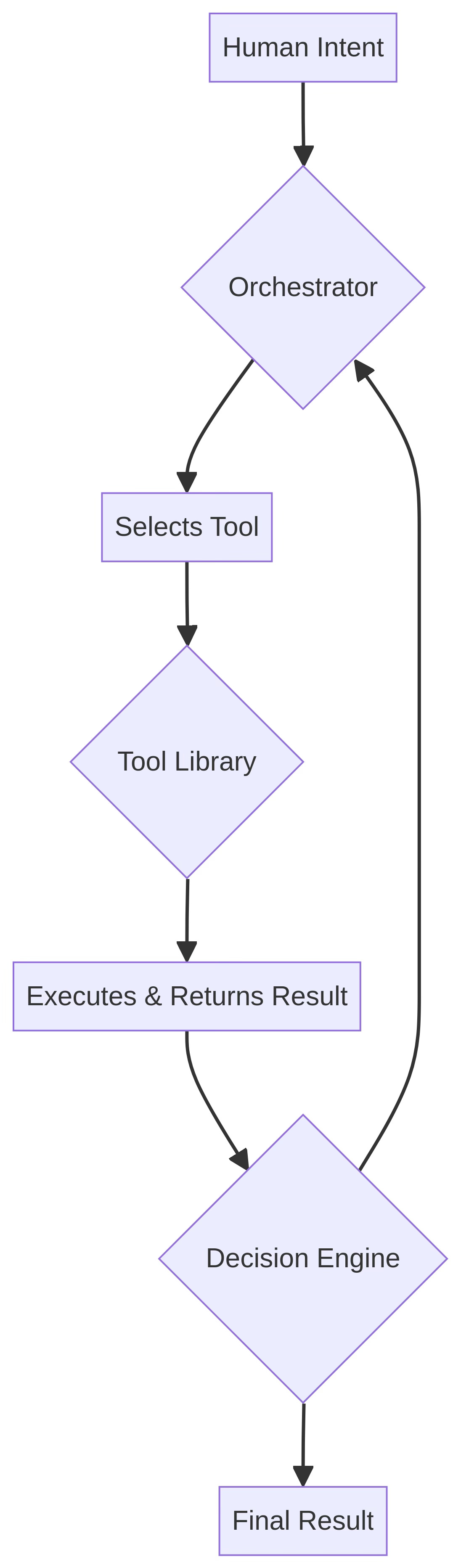

The successor to the dashboard is not a better, faster dashboard. It is a fundamentally different architecture built around AI agents. This agent-oriented model inverts the traditional relationship between human and computer. Instead of a human navigating a rigid, pre-defined interface to pull information, the human simply states their intent, and an autonomous agent orchestrates the necessary tools and workflows to fulfill it.

This new architecture is composed of several key, interlocking components that together create a system capable of understanding goals and taking action.

Below are the core components of an agentic system.

Intent Engine

Captures the user’s goal, increasingly through natural language (typed or spoken). This is the entry point for any task.

This is the system’s “ear.” It has to be brilliant at understanding what you mean, not just what you say, translating messy human language into a clear, machine-readable goal.

Orchestrator

A central agent, often called a “planner,” that decomposes the high-level intent into a sequence of concrete steps or a complex dependency graph.

This is the “brain” of the operation. It’s the master strategist that looks at the goal and figures out the step-by-step recipe to get there, like a project manager for the AI.

Tool Library

A collection of discrete, well-defined functions (APIs) that agents can call to interact with the world (e.g., query a database, call a web service, run a script).

These are the agent’s “hands.” Each tool does one thing and does it well. By giving the agent a rich library of tools, you give it the ability to perform a wide range of actions.

State Tracker

A mechanism for maintaining the context of a task over time, allowing the agent to reason about multi-step processes and remember what it has already done.

This is the agent’s “memory.” Without it, every step would be independent. The state tracker lets the agent have a coherent, multi-step “thought process.”

Decision Engine

A module that synthesizes the outputs from various tool calls and determines the next best action, adapting the plan as new information becomes available.

This is the agent’s “judgment.” After a tool runs, the decision engine looks at the result and decides, “What now?” Does it run another tool? Ask a clarifying question? Or is the task complete?

This architecture creates a dynamic and continuous loop of action and observation, allowing the system to navigate complex tasks autonomously.
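The loop of action and observation can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `TaskState`, `parse_intent`, `plan`, `decide`, `run_agent`, and both tools are hypothetical names, and the rule-based functions stand in for work an LLM would do in a real system.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


# Tool Library: plain functions keyed by name (both are hypothetical stubs).
def lookup_revenue(query: str) -> str:
    return "revenue=1200 (placeholder figure)"


def draft_summary(text: str) -> str:
    return f"Summary: {text}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_revenue": lookup_revenue,
    "draft_summary": draft_summary,
}


@dataclass
class TaskState:
    """State Tracker: remembers the goal, the remaining plan, and past results."""
    goal: str
    pending: List[str] = field(default_factory=list)
    history: List[Tuple[str, str]] = field(default_factory=list)


def parse_intent(utterance: str) -> str:
    """Intent Engine stand-in: normalizes free text into a goal string."""
    return utterance.strip().lower().rstrip("?.!")


def plan(goal: str) -> List[str]:
    """Orchestrator stand-in: maps a goal to an ordered list of tool names."""
    if "revenue" in goal:
        return ["lookup_revenue", "draft_summary"]
    return []


def decide(state: TaskState) -> Optional[str]:
    """Decision Engine stand-in: picks the next tool, or None when done."""
    return state.pending.pop(0) if state.pending else None


def run_agent(utterance: str, max_steps: int = 10) -> TaskState:
    goal = parse_intent(utterance)
    state = TaskState(goal=goal, pending=plan(goal))
    for _ in range(max_steps):  # hard cap so the loop always terminates
        tool_name = decide(state)
        if tool_name is None:
            break
        # Feed the previous result forward; the first step gets the goal itself.
        tool_input = state.history[-1][1] if state.history else state.goal
        state.history.append((tool_name, TOOLS[tool_name](tool_input)))
    return state


state = run_agent("Summarize revenue for EMEA?")
for name, output in state.history:
    print(name, "->", output)
```

Each pass through the loop is one act-then-observe cycle: the decision step picks a tool, the tool runs, and its result is recorded in the state so the next decision can build on it.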

This model is not a far-future concept. It is the core pattern underlying the most advanced AI systems emerging in 2025 and 2026, from OpenAI’s dedicated Codex App to Anthropic’s terminal-native Claude Code.

Technical Deep Dive: Code Over Clicks

The true power of the agentic model lies in its foundational principle: building with APIs, not GUIs. A graphical interface is a monolithic, human-centric interpretation of a workflow. It is inherently rigid, tightly coupled to a specific presentation layer, and difficult to automate. An API (Application Programming Interface), by contrast, is a composable, machine-first unit of functionality. This distinction has profound implications for creating scalable, robust, and flexible systems.

To make this concrete, let’s walk through a simplified example in Python. Even if you don’t code, the concepts here are key to understanding how agents work. We’re going to build a tiny financial agent. The goal is to create a system that can understand a user’s request for a stock price and then use a “tool” to get that information.

First, we need a tool. In the agentic world, a “tool” is just a function — a self-contained block of code that performs a specific task. Our tool will be a function called get_stock_price. For this example, it will just return a fixed price for a specific stock symbol, but in a real-world application, this function would make a network request to a live financial data service.

Second, we need the agent itself. We’ll create a Python class called Agent. This class will have a “toolbox” — a list or dictionary of the tools it knows how to use.

Finally, the agent needs a way to process a user’s request and decide which tool to use. This is the job of the Orchestrator and Decision Engine. In our simple example, we’ll simulate this with a basic if statement. In a real AI agent, this is where a powerful Large Language Model (LLM) would analyze the user’s goal and intelligently select the right tool from its toolbox.

Here is what that looks like in code:

```python
import json


# This is our "Tool" - a function the agent can call.
def get_stock_price(symbol: str) -> str:
    """A dummy function to get a stock price. In a real scenario, this would call a financial API."""
    if symbol == "MANU":
        return json.dumps({"symbol": "MANU", "price": 125.50})
    else:
        return json.dumps({"error": "Symbol not found"})


# This is our "Agent"
class Agent:
    def __init__(self):
        # The agent has a "toolbox" of available tools.
        self.tools = {
            "get_stock_price": get_stock_price
        }

    # This method simulates the Orchestrator/Decision Engine.
    def process_intent(self, user_intent: str):
        # An LLM would normally parse the intent. Here, we use a simple rule.
        if "price of MANU" in user_intent:
            print("Agent decided to call the 'get_stock_price' tool.")
            result = self.tools["get_stock_price"]("MANU")
            print(f"Tool Result: {result}")
        else:
            print("Agent could not determine which tool to use.")


# --- Execution ---
agent = Agent()
agent.process_intent("What is the current price of MANU?")
```

Even if you skipped reading the code, the output tells the story. When we run it with the request, “What is the current price of MANU?”, the agent correctly identifies the intent, calls the appropriate tool, and gets the result. This simple pattern, an agent possessing a set of tools and a mechanism to decide which one to use based on intent, is the fundamental building block of post-screen software. By exposing capabilities as a library of tools, developers can create systems that are far more dynamic. An agent can chain these tools together in novel combinations to solve problems the original developers may never have even anticipated.
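To show what chaining might look like, here is a small sketch that feeds the output of one tool into another. Everything beyond `get_stock_price` is an assumption made for illustration: `get_fx_rate`, `convert_amount`, and `run_chain` are hypothetical, the exchange rate is a placeholder chosen for easy arithmetic, and in a real agent the list of steps would be produced by an LLM, not written by hand.

```python
import json


def get_stock_price(symbol: str) -> str:
    """The same dummy tool as in the example above."""
    if symbol == "MANU":
        return json.dumps({"symbol": "MANU", "price": 125.50})
    return json.dumps({"error": "Symbol not found"})


def get_fx_rate(pair: str) -> str:
    """Hypothetical tool returning a fixed placeholder exchange rate."""
    rates = {"USD/EUR": 0.5}  # not a real rate
    if pair in rates:
        return json.dumps({"pair": pair, "rate": rates[pair]})
    return json.dumps({"error": "Pair not found"})


def convert_amount(amount: float, rate: float) -> str:
    """Hypothetical tool that applies a rate to an amount."""
    return json.dumps({"amount": round(amount * rate, 2)})


TOOLS = {
    "get_stock_price": get_stock_price,
    "get_fx_rate": get_fx_rate,
    "convert_amount": convert_amount,
}


def run_chain(steps, tools=TOOLS):
    """Call each tool in order, stopping at the first error result.

    Each step is (tool_name, make_args), where make_args builds the tool's
    keyword arguments from the results collected so far.
    """
    context = {}
    for tool_name, make_args in steps:
        result = json.loads(tools[tool_name](**make_args(context)))
        if "error" in result:
            return {"error": result["error"], "failed_step": tool_name}
        context[tool_name] = result
    return context


steps = [
    ("get_stock_price", lambda ctx: {"symbol": "MANU"}),
    ("get_fx_rate", lambda ctx: {"pair": "USD/EUR"}),
    # This step depends on the two results before it.
    ("convert_amount", lambda ctx: {
        "amount": ctx["get_stock_price"]["price"],
        "rate": ctx["get_fx_rate"]["rate"],
    }),
]

result = run_chain(steps)
print(result["convert_amount"])
```

None of the three tools knows about the others. The combination lives entirely in the list of steps, which is why a new question can often be answered by a new plan instead of new code.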

Like Claude Code on Steroids

The ultimate evolution of the intent engine is the removal of the keyboard itself. The keyboard is a profound bottleneck between the human brain and the digital world. As Fabio Moioli, a leader at the executive search firm Spencer Stuart, wrote in a prescient January 2026 article, “The most underappreciated limiting factor to AGI-level productivity is human typing speed... We are limited by our fingers.”

The QWERTY layout, an artifact of nineteenth-century mechanical typewriters that is often said to have been arranged to keep the type bars from jamming, is an absurdly low-bandwidth interface for interacting with systems that respond in milliseconds. Voice, in contrast, is a high-bandwidth medium that mirrors the non-linear, associative, and often recursive way we think.

This is the trajectory the industry is on: “Claude Code on steroids.” It’s not just about coding; it’s about expressing complex, multi-layered intent through natural, fluid conversation. In March 2026, Anthropic took a major step in this direction by launching a voice mode for Claude Code, allowing developers to direct the agent through spoken commands. This isn’t a mere convenience; it’s a paradigm shift. It closes the gap between thought and execution to a degree that was previously science fiction.

Imagine an engineer, instead of typing, simply speaking their intent in a stream of consciousness: “Okay, let’s refactor the entire authentication service. It needs to support passkeys, and we should probably cache the user profile in Redis for five minutes to reduce database hits. While you’re at it, scan for any obvious security vulnerabilities in the session management, and make sure the test coverage doesn’t drop below 90%. Let me know what you find before you commit anything.” In this scenario, an agent (or a team of agents) orchestrates that entire complex workflow. The screen becomes a secondary, optional display for verification and high-level oversight, not the primary, mandatory site of work.
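One way to picture what an orchestrator might do with a request like this is to turn it into steps with explicit dependencies and then order them. The sketch below uses Python’s standard `graphlib` module for the ordering. The step names, the `constraints` dictionary, and the decomposition itself are assumptions made for illustration; in practice a planner model would generate them from the spoken request.

```python
from graphlib import TopologicalSorter

# Constraints pulled out of the spoken request (illustrative names).
constraints = {"cache_ttl_seconds": 5 * 60, "min_test_coverage": 0.90}

# Each step maps to the set of steps that must finish before it can start.
plan = {
    "add_passkey_support": set(),
    "cache_profile_in_redis": set(),
    "scan_session_management": set(),
    "run_tests_check_coverage": {"add_passkey_support", "cache_profile_in_redis"},
    "report_findings": {"scan_session_management", "run_tests_check_coverage"},
    # "Let me know what you find before you commit anything."
    "await_human_approval": {"report_findings"},
    "commit_changes": {"await_human_approval"},
}

# static_order() yields every step after all of its prerequisites.
order = list(TopologicalSorter(plan).static_order())
for position, step in enumerate(order, start=1):
    print(position, step)
```

The first three steps have no prerequisites, so separate agents could run them in parallel. The approval step sits between the report and the commit, which is how the human stays in the loop without doing the work.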

Connecting to the “Agent Factory”

This architectural shift directly enables the vision of an “agent factory.” The factory’s output is not monolithic applications, but a portfolio of specialized agents and a robust, ever-growing library of tools they can use. The architecture described here is the operating system for that factory.

The Tool Library is the factory’s inventory of parts — discrete, versioned, and well-tested APIs that any agent can call. The Orchestrator is the assembly line manager, pulling parts as needed and sequencing them according to the task at hand. The Decision Engine is the quality control system, ensuring each step aligns with the overall goal and adapting the plan when something unexpected occurs.

Building this architecture is how you scale the production of intelligent systems, moving from crafting individual applications to manufacturing autonomous capabilities.

The Developer’s New Role

In this post-screen world, the role of the software developer undergoes a profound transformation. The focus shifts from crafting pixel-perfect user interfaces to building and maintaining the robust, reliable tools that agents consume. The most valuable engineering work will not be in the frontend directory, but in the design of well-documented, idempotent, and observable APIs.

The new frontier is orchestration. The elite developers of tomorrow will be those who can design, debug, and manage complex systems of interacting agents. Their primary skill will be not just writing code, but shaping the behavior of AI systems that write code. The dashboard is dead. The future is a conversation — and the software that can listen, understand, and act will win.

Reminds me of the classic Mitt Romney quote: "Corporations are people, my friend."

Perhaps these agents will generally be more like people, too.

Exciting (and scary) times!