Building the Anti-Hallucination Pipeline: Post-Hoc Verification in Practice

How to design multi-model architectures that catch errors before your clients do.

If you have ever copy-pasted information from an AI-generated summary into a client report, only to discover later that the model fabricated a regulatory citation or hallucinated an ESG metric, you know the pain. I have been facing this challenge daily because my company performs data analysis from public corporate data. We rely on AI to parse complex documents, extract relevant sustainability metrics, and synthesize causal relationships. But we cannot afford to be wrong. When a fabricated citation makes it into a final document, the cost is immense. We pay a hidden “hallucination tax” in the form of manual verification, eroded trust, and potential liability.

The standard advice for dealing with hallucinations is to “write better prompts” or “use retrieval-augmented generation (RAG).” But as anyone who has built these systems knows, even the best RAG pipelines hallucinate. The models are fundamentally designed to give you an answer—any answer—rather than admit they do not know. They will confidently stitch together disparate facts to form a coherent, yet entirely fictional, narrative.

To solve this, we need to shift our architectural thinking. Instead of trying to build a single, perfect model, we need to build verification pipelines. We need to put the AI on trial. We must move away from the paradigm of “trust the AI” and embrace a new standard: post-hoc verification. This means assuming the initial output is flawed and actively working to break it before it ever reaches a human reader.

The Architecture of Over-Compliance

Before we build a solution, we need to understand the problem at a technical level. Why do these models hallucinate so confidently? Recent research from Tsinghua University identified specific neurons, which they call H-Neurons, that are responsible for hallucination. These neurons do not encode false information; rather, they encode the drive to comply with the user’s prompt.

This means hallucination, sycophancy (agreeing with a false premise), and jailbreak vulnerability are all driven by the exact same underlying mechanism: over-compliance. The model wants to please you. If you ask it for a citation supporting a specific claim, and that citation does not exist, the H-Neurons will push the model to invent one rather than disappoint you with a refusal.

Crucially, standard safety training—the alignment process that every major AI company performs before releasing a model—does not restructure these neurons. The researchers measured what happens to these neurons during alignment and found a parameter stability score of 0.97 out of 1.0. The models are mathematically incentivised to please you, and safety training merely adds a thin layer of behavioral guardrails over this fundamental drive.

Therefore, any architecture that relies on a single model’s output is inherently risky. We must design systems that assume the initial output is flawed and actively work to break it. We need a system of checks and balances, much like the peer-review process in academia or the adversarial system in law.

Technique 1: Multi-Model Consensus (The Independent Council)

The first step in a robust verification pipeline is to stop relying on a single model family. Different architectures (for example, dense Transformers versus Mixture of Experts) and different training data distributions lead to different failure patterns. If Claude, GPT, and Grok all make a mistake, they rarely make the exact same mistake.

By querying three different models in parallel and comparing their outputs, we can surface uncertainty. When they disagree, we flag the response for human review or further automated refinement. This is the “Independent Council” approach.

Instead of writing complex routing logic from scratch, you can orchestrate this using modern agentic frameworks. The goal is to send the exact same prompt to, say, Claude 3.5 Sonnet, GPT-4o, and Grok 1.5 simultaneously. You then need a synthesis step that looks at all three answers and identifies where they converge and where they diverge. If all three models confidently assert a fact, the probability of it being a hallucination drops significantly. If one model invents a citation that the other two omit, you have caught a hallucination in the act.

Technique 2: Adversarial Refinement (The Devil’s Advocate)

Once you have a baseline answer, or a consensus answer from your Independent Council, the next step is to attack it. We use a separate model instance—prompted specifically to be highly critical and sceptical—to find flaws, logical leaps, or unsupported claims in the original output.

This is the “Devil’s Advocate” pass. The adversarial model is not trying to answer the original question; it is only trying to break the proposed answer. You prompt this model to act as a ruthless fact-checker. Its only job is to list the weaknesses in the text.

After the Devil’s Advocate generates its critique, you pass that critique back to a synthesizer model to refine the original answer. This loop can be repeated multiple times until the Devil’s Advocate can no longer find significant flaws. This adversarial process forces the final output to be much more defensible and strips away the over-confident fluff that models tend to generate.

Technique 3: Live Claim Verification

The most dangerous hallucinations are fabricated citations, statistics, or dates. To catch these, we must extract the specific factual claims from the text and verify them against live web sources or a trusted internal database.

This is not AI checking AI; this is AI extracting claims, and traditional search verifying them. You first prompt a model to extract all verifiable factual claims from the text into a structured format, like a JSON list. Then, you iterate through that list, running a web search or a database query for each claim. Finally, you evaluate whether the search results actually support the claim.

If a claim cannot be verified by an external source, it is flagged or removed from the final output. This grounds the model’s output in reality and ensures that every statistic or citation has a verifiable origin.

Building the Pipeline with Claude Code

Writing the boilerplate code to orchestrate these multi-model calls, adversarial loops, and search integrations can be tedious. This is where AI-assisted coding workflows become invaluable. Instead of manually writing the asynchronous API calls and JSON parsing logic, you can use tools like Claude Code to scaffold the entire architecture from your terminal.

Imagine you want to build this verification pipeline. You can open your terminal, initialize Claude Code, and describe the workflow: “Build a Python script using the Anthropic and OpenAI SDKs. It should take a user prompt, send it asynchronously to both Claude and GPT-4, wait for the responses, and then pass both responses to a third ‘Devil’s Advocate’ Claude instance to find contradictions between them.”

Claude Code will navigate your file system, create the necessary Python files, write the asynchronous orchestration code, and even set up the environment variables for your API keys. It handles the tedious parts of pipeline engineering—like managing concurrent requests and structuring the prompts—allowing you to focus on the architectural design.

As we move toward more complex agentic systems, the value is no longer in writing the individual lines of code, but in designing the workflow. You act as the architect, defining the stages of verification, while Claude Code acts as the builder, assembling the pipeline. You can iterate rapidly, asking Claude Code to add a live search verification step or to implement a retry mechanism for failed API calls, building a robust system in a fraction of the time it would take manually.

Case Study: The Triall AI Pipeline in Action

You do not have to build this from scratch. Products are emerging that package these pipelines into a single service. A prime example is Triall AI, built by Maarten Rischen, a reader of this newsletter. Triall implements a comprehensive pipeline that combines all the techniques discussed above.

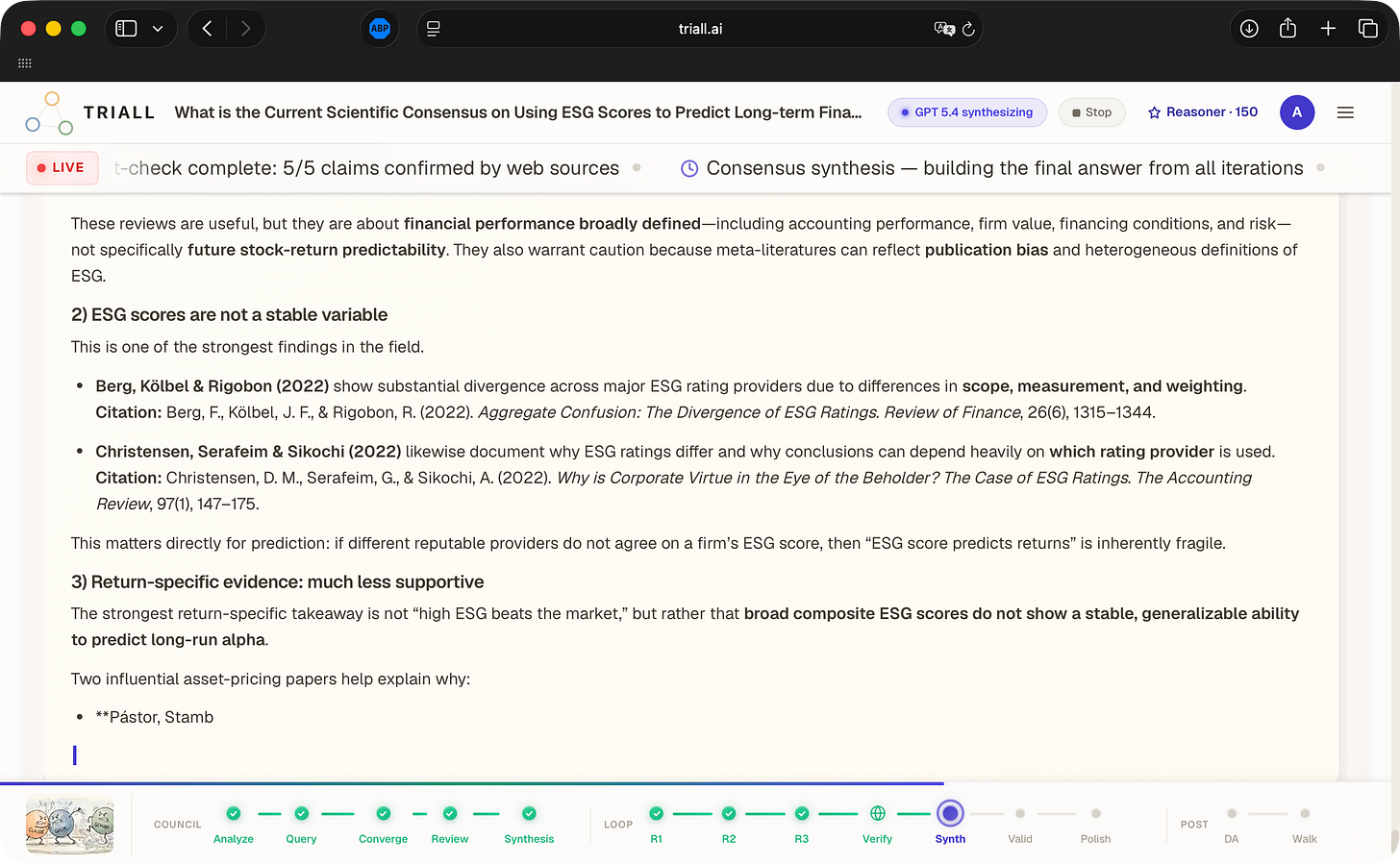

To see how this works in practice, I ran a highly specific, research-heavy prompt through Triall’s “Full Power” mode: “What is the current scientific consensus on using ESG scores to predict long-term financial returns? Please cite specific studies.” This is exactly the kind of question where models confidently fabricate academic citations.

Triall did not just give me an answer; it showed me the work. The platform uses a 12-step pipeline tracker: Analyze → Query → Converge → Review → Synthesis → R1 → R2 → R3 → Verify → Synth → Valid → Polish → DA (Devil’s Advocate) → Walk.

In the council phase, Claude Opus 4.6, GPT 5.4, and Grok 4.20 Beta all tackled the question independently. They converged on rejecting a simple affirmative consensus, noting that ESG scores are noisy and methodologically flawed. But the real magic happened in the peer review and adversarial stages.

The independent audit log showed that the system caught 15 weaknesses across 3 rounds of critique. It detected and corrected for bias, and filled gaps the first draft missed entirely. Most impressively, the Devil’s Advocate found 3 blind spots that were addressed in the final output, and it raised 4 counterarguments.

During the live claim verification stage, the system flagged a fabricated citation. One of the models had confidently cited a non-existent paper: “Bruno, Esposito & Guillin (2022).” The peer review process caught this fabrication, noting it lacked journal details, and the live web search confirmed it was a hallucination. The final output was stripped of this fake citation and grounded entirely in verified sources, with a live status bar proudly displaying: “5/5 claims confirmed by web sources.”

This is what post-hoc verification looks like. It is rigorous, transparent, and infinitely more trustworthy than a single model’s output.

Comparing the Approaches

If you are designing your own verification architecture, you need to weigh the trade-offs of each technique.

| Technique | Best For | Limitations |

| ----------------------- | --------------------------------------------------------------------------------------- | --------------------------------------------------------------------------------------------------------------------- |

| Multi-Model Consensus | Surfacing uncertainty in complex reasoning tasks and avoiding single-model bias. | High cost, high latency, requires parsing disparate outputs from different providers. |

| Adversarial Refinement | Catching logical leaps, over-confident assertions, and structural flaws in an argument. | Can be overly aggressive, leading to "watered down" final answers if the critic is too strict. |

| Live Claim Verification | Catching fabricated statistics, dates, and academic citations. | Slow, dependent on the quality of the search index; web search can sometimes validate widely repeated misinformation. |Synthesis: Combining Approaches for Maximum Reliability

No single technique is a silver bullet. Multi-model consensus is great for catching reasoning errors, but if all models were trained on the same flawed internet data, they might all agree on a falsehood. Adversarial refinement improves logic but cannot verify external facts. Live claim verification grounds the text in reality but struggles with abstract reasoning.

The most robust architectures combine all three. You start with a multi-model council to generate a diverse set of candidate answers. You synthesize the best elements into a draft. You pass that draft through an adversarial refinement loop to tighten the logic. Finally, you extract the factual claims from the refined draft and verify them against live sources.

This is computationally expensive. It takes longer to run, and it costs more in API credits. But in financial services, the cost of a hallucination far outweighs the cost of a few extra API calls.

The Bottom Line

The era of the single-prompt, single-model workflow is ending, especially for technical and financial professionals. As we integrate AI deeper into our core operations, our focus must shift from prompt engineering to pipeline engineering. We can no longer accept the output of a single model at face value, knowing that its underlying architecture is optimised for compliance rather than truth.

By building multi-model consensus, adversarial refinement, and live verification into our architectures—whether by using tools like Claude Code to scaffold our own systems or by adopting platforms like Triall AI—we can finally start trusting the outputs we generate. Trust nothing, verify everything, and put the AI on trial.